ULTRA uses an individual's signature everywhere: memoisation, cache lookups, equality checks, population management... The quality of the hash matters, but so does the way the hash is produced.

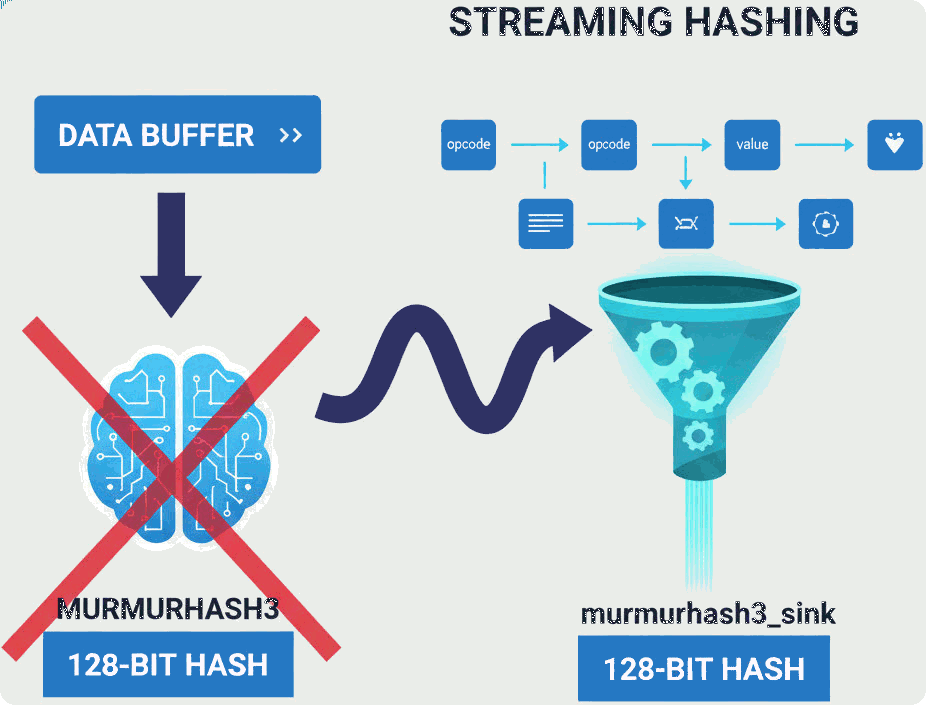

This post describes the transition from a monolithic, buffer-based hashing approach to a streaming, sink-based design and how that change naturally simplified the implementation of pack().

The original problem: hashing structured data

A genetic programming (GP) individual, in ULTRA, isn't a flat byte buffer. It's a structured object containing:

- opcodes and addresses;

- immediate values of different types;

- vectors and optional components;

- implicit ordering constraints.

To compute a stable signature, the object must first be linearised into a canonical byte sequence, a job handled by pack(), and then hashed (at the moment using MurmurHash3).

Originally, this followed a familiar pattern:

- build a temporary buffer of bytes;

- pass the entire buffer to

murmurhash3::hash128; - discard the buffer.

This works and GA/DE individuals, which are trivially packable, continue to follow this sequence (skipping point 1).

For GP individuals the approach carries a few drawbacks.

Why the buffer-based approach was suboptimal

While correct, the original design had some hidden costs:

- unnecessary memory traffic → bytes were written to a buffer only to be read again immediately;

- artificial coupling →

pack()had to care about storage, not just representation; - poor scalability → hashing large or nested structures required building equally large buffers;

- missed expressiveness →

MurmurHash3is inherently block-based, but the API forced a single-shot model.

These issues suggested that hashing should behave more like an output sink, consuming bytes incrementally, rather than as a single final transformation.

Rethinking MurmurHash3 as a sink

MurmurHash3 x64 128-bit naturally operates on:

- 16-byte blocks;

- a remaining tail;

- a finalisation step that depends on total length.

This maps almost perfectly onto a streaming abstraction.

The result was murmurhash3_sink, an incremental hasher with a minimal interface:

hash_sink h;

h.write(bytes1);

h.write(bytes2);

...

auto signature = h.final();

(write() accepts arbitrary byte sequences and can be called repeatedly)

Internally, the sink:

- accumulates partial blocks in a small fixed-size tail;

- processes full 128-bit blocks immediately;

- tracks total length for correct finalisation.

Crucially, the algorithm itself remains unchanged, only its presentation is different.

Separating responsibilities: pack() vs hashing

With a sink available, the responsibilities became clearer:

pack()defines the canonical byte representation of an individual, emits bytes in a well-defined order, knows nothing about hashing internals.murmurhash3_sinkconsumes bytes, appliesMurmurHash3incrementally, produces the final 128-bit hash.

This separation isn't just architectural purity, it has concrete benefits.

The new pack() in practice

Instead of appending to a container, pack() now streams data:

hash_sink h;

pack(start(), h);

return h.final();

pack() now receives a sink explicitly, making byte emission an external concern. Each element of the GP individual is encoded and written directly into the sink as soon as it's encountered.

This leads to:

- zero intermediate buffers;

- constant auxiliary memory;

- better cache behaviour;

- simpler control flow.

The logic of pack() is now entirely about what is written, not where.

A natural fit for modern C++

The sink-based design composes naturally with other abstractions: a different hash algorithm, a debug sink or even a serialisation sink can be plugged in with no changes to pack() itself.

Final thoughts

This refactor didn't change the observable behaviour of ULTRA at all.

Hashes remain identical, tests still pass and performance improves slightly due to reduced memory traffic.

Internally, the codebase is now clearer because pack() defines representation, the sink defines consumption and MurmurHash3 does what it does best: one block at a time.

Sometimes the most valuable refactors aren't about new features but about letting each piece of code do exactly one thing, well.